
How to easily get perfect setups in F1 Manager 2022, with a detailed explanation of how the method works.

Perfect Setups Guide (Setup Calculator)

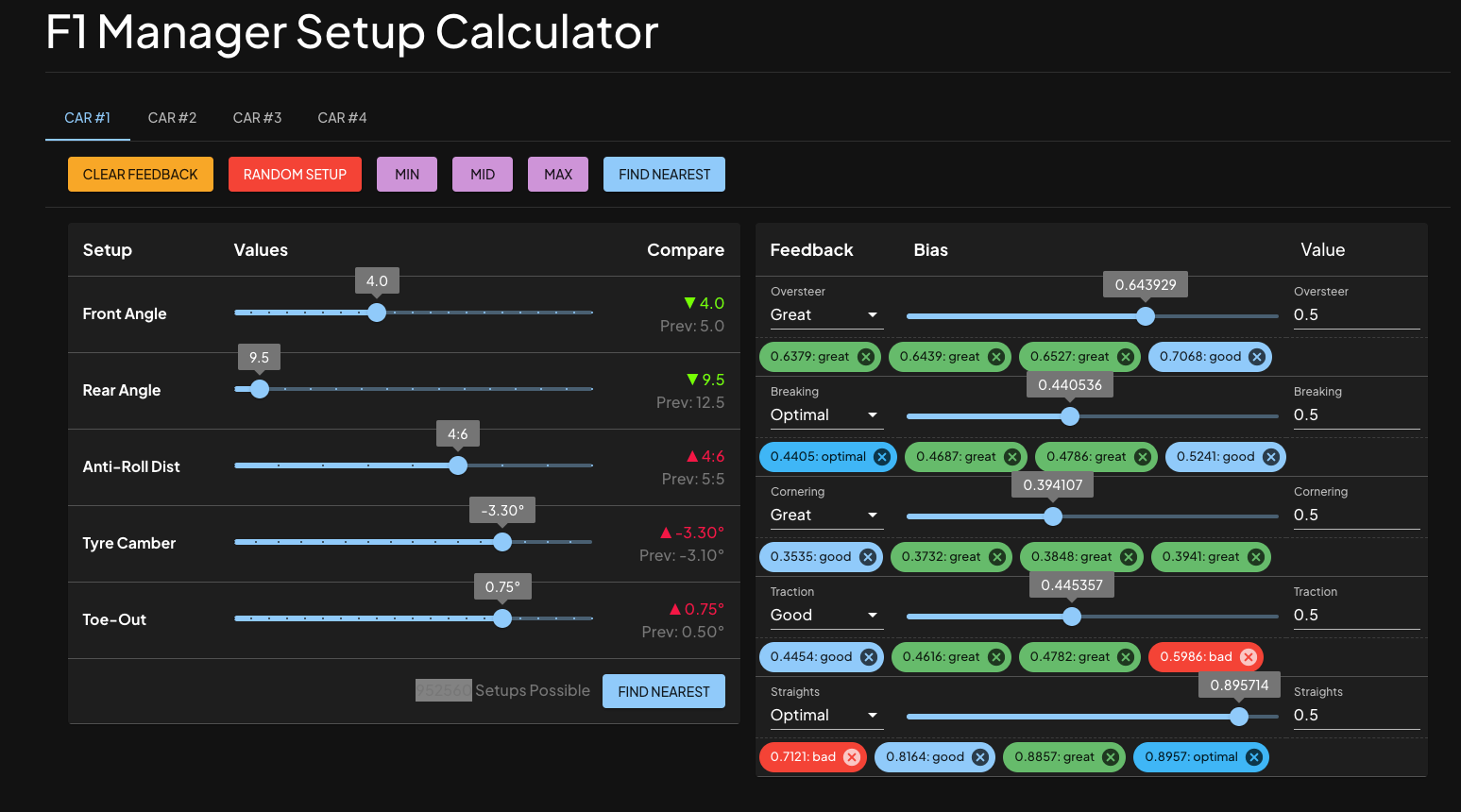

TL;DR: Website: https://f1setup.it/

If you are curious about how or why it works, just scroll down: the later sections contain the technical analysis.

- 1. Pick your current practice setup on the left.

- 2. Do a practice run and wait for the feedback.

- 3. Select the matching feedback after the run.

- 4. Click “FIND SETUP” to get a suggested setup, then start over.

- Within 3-5 attempts this should get you a 99%-100% setup!

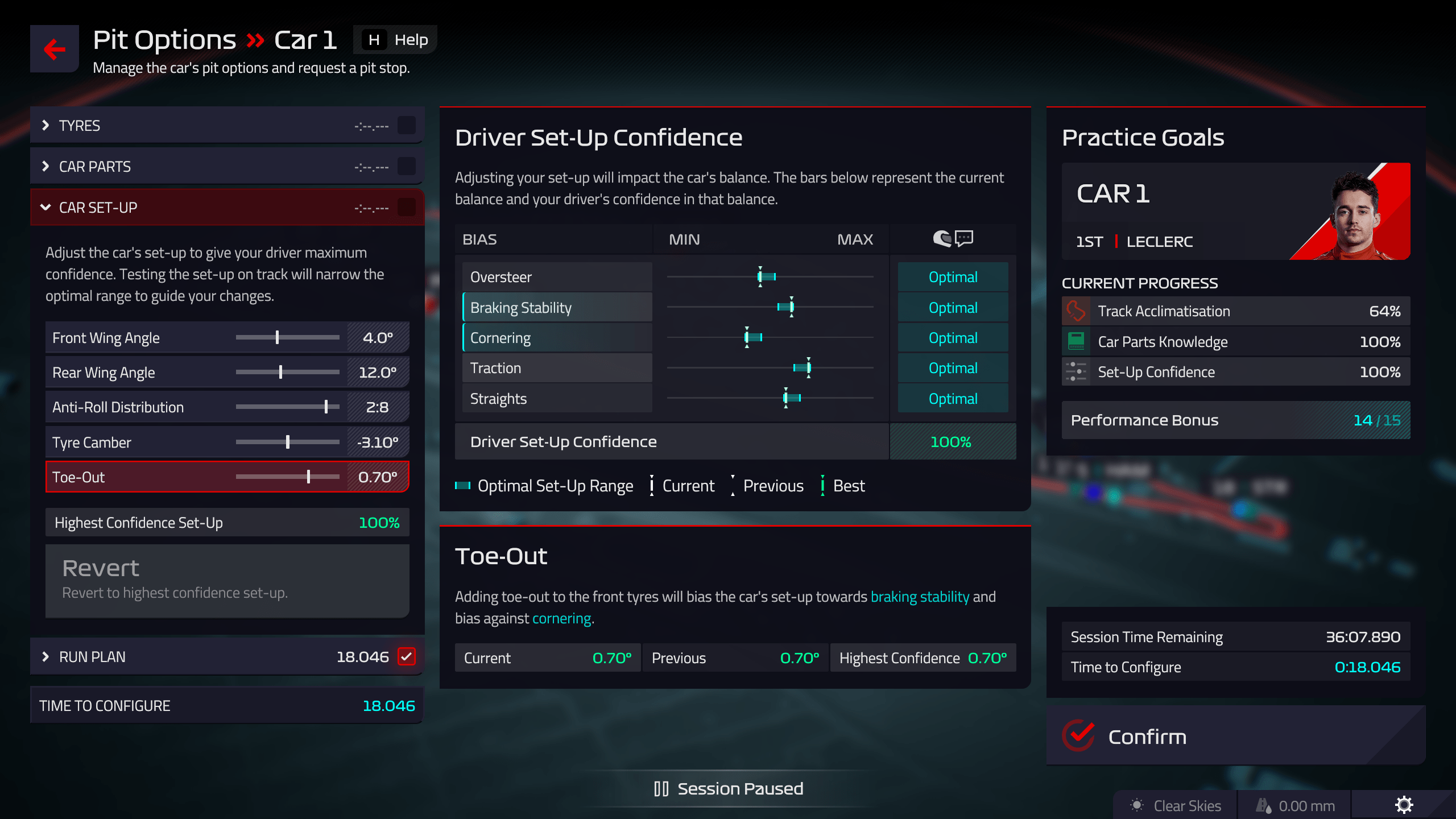

How Setup Works

Different setups produce different car bias values, and a better setup gives better performance, which can translate into race victories and championships. This table shows how increasing each setup parameter affects each bias value (+ raises it, – lowers it).

| Setup | Oversteer | Braking Stability | Cornering | Traction | Straights |
| --- | --- | --- | --- | --- | --- |
| Front Wing Angle | + | – | + | – | – |
| Rear Wing Angle | – | + | + | + | – |
| Anti-Roll Distribution | – | + | – | + | |
| Tyre Camber | + | – | + | – | |
| Toe-Out | | + | – | | |

Technical Details

How do these setups work?

After collecting a lot of data points, we found that each resulting bias is a linear function of the setup inputs: if you move a slider twice as far, the change in bias is exactly doubled. This is the foundation of the analysis. First we normalize the inputs and outputs to the [0, 1] range, so the leftmost position is assigned 0 and the rightmost 1. After a lot of pixel-counting we got the following tables:

Initial Values:

| All sliders at | Oversteer | Braking Stability | Cornering | Traction | Straights |
| --- | --- | --- | --- | --- | --- |
| Left | 0.5 | 0.45 | 0.2 | 0.25 | 1 |
| Initial (Middle) | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 |
| Right | 0.5 | 0.55 | 0.8 | 0.75 | 0 |

Coefficients:

| Setup | Oversteer | Braking Stability | Cornering | Traction | Straights |
| --- | --- | --- | --- | --- | --- |
| Front Wing Angle | 0.4 | -0.2 | 0.3 | -0.15 | -0.1 |
| Rear Wing Angle | -0.4 | 0.2 | 0.25 | 0.25 | -0.9 |
| Anti-Roll Distribution | -0.1 | 0.15 | -0.15 | 0.5 | |
| Tyre Camber | 0.1 | -0.25 | 0.25 | -0.1 | |
| Toe-Out | | 0.2 | -0.05 | | |

This means that if we move Front Wing Angle 1 unit to the right (its full range after normalization), we get 0.4 more Oversteer, 0.2 less Braking Stability, 0.3 more Cornering, 0.15 less Traction and 0.1 less Straights, and so on for the other rows.
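As a minimal sketch of the linear model described above, the bias for any normalized setup can be computed as the "Left" row plus the slider values times the coefficients. The names `LEFT`, `COEFFS` and `bias` are illustrative only. Two assumptions are made: blank cells are treated as 0, and Toe-Out's two coefficients sit under Braking Stability and Cornering, which is the placement that reproduces the Left and Right rows of the Initial Values table.

```python
# Bias values with every slider at its leftmost position (the "Left" row).
# Column order: Oversteer, Braking Stability, Cornering, Traction, Straights.
LEFT = [0.5, 0.45, 0.2, 0.25, 1.0]

# One row per slider: Front Wing, Rear Wing, Anti-Roll, Tyre Camber, Toe-Out.
# Assumption: blank table cells are 0, and Toe-Out affects only
# Braking Stability and Cornering.
COEFFS = [
    [0.4, -0.2, 0.3, -0.15, -0.1],
    [-0.4, 0.2, 0.25, 0.25, -0.9],
    [-0.1, 0.15, -0.15, 0.5, 0.0],
    [0.1, -0.25, 0.25, -0.1, 0.0],
    [0.0, 0.2, -0.05, 0.0, 0.0],
]


def bias(setup):
    """Return the five bias values for five slider positions in [0, 1]."""
    return [
        LEFT[j] + sum(setup[i] * COEFFS[i][j] for i in range(5))
        for j in range(5)
    ]


middle = bias([0.5] * 5)  # every slider centred
right = bias([1.0] * 5)   # every slider fully right
```

With these numbers, `middle` comes out as 0.5 for every metric and `right` matches the "Right" row, up to floating-point rounding.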

Feedback and Confidence Values

But what about the feedback?

Since we know the relationship between setups and biases, we can compute the exact bias values for any given setup. After another huge number of trials, I figured out the following thresholds, where d is the difference from the perfect bias:

- optimal: d <= 0.007 (technically 39/5600, but 0.007 lies between 39/5600 and 40/5600, so it works just as well)

- great: d <= 0.04

- good: d <= 0.1

- bad: d > 0.1
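The thresholds above can be sketched as a small lookup function. The name `feedback` is illustrative, and taking the absolute value of d is an assumption (the list only talks about the size of the difference).

```python
def feedback(d):
    """Map the distance d from the perfect bias to the feedback label."""
    d = abs(d)  # assumption: only the size of the difference matters
    if d <= 0.007:
        return "optimal"
    if d <= 0.04:
        return "great"
    if d <= 0.1:
        return "good"
    return "bad"
```

For example, `feedback(39 / 5600)` is still "optimal", while `feedback(40 / 5600)` already drops to "great".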

And the Confidence Value?

- For each bias metric, subtract 1% for every 0.01 of difference, capped at 20% per metric.

- So if a specific metric is way too high or too low, it still only costs 20%.

- The five caps add up to the whole 100%; the Confidence Value is 100% minus the summed penalties.

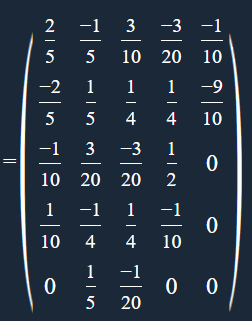
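A minimal sketch of the confidence rule, assuming the penalty grows proportionally with the difference (1% per 0.01) rather than in whole-percent steps. The function name `confidence` and its arguments are illustrative.

```python
def confidence(current, perfect):
    """Return the confidence in percent for five current vs. perfect biases."""
    total = 100.0
    for c, p in zip(current, perfect):
        # 1% per 0.01 of difference, capped at 20% for each metric.
        # Assumption: the penalty scales continuously with the difference.
        total -= min(abs(c - p) * 100.0, 20.0)
    return total
```

A setup that is off by 0.05 on a single metric would score about 95%, and one that is far off on all five metrics bottoms out at 0%.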

Conversion and Converting Back

What if I already have a desired bias and want to know which setup produces it? In linear-algebra terms, the bias calculation is just a matrix multiplication.

Let 𝑴 be the matrix of coefficients from the table above, 𝑺 the setup and 𝑹 the bias result, measured as the change from the "Left" row. Then we have

𝑺𝑴 = 𝑹

Matrix multiplication is associative and 𝑴 is invertible, so we have

𝑺𝑴𝑴⁻¹ = 𝑹𝑴⁻¹, which means 𝑺 = 𝑹𝑴⁻¹

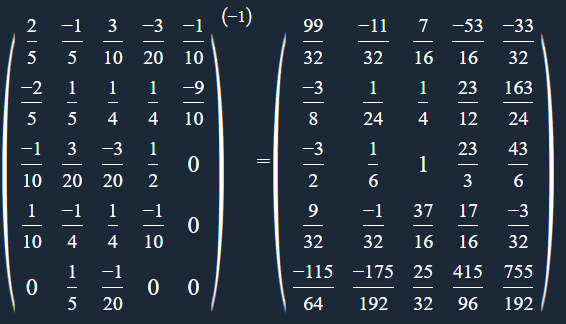

Since the matrix 𝑴 is invertible, 𝑴⁻¹ is easy to calculate.

That said, since 𝑺 is discrete in-game, the solution is only theoretical and not directly usable; it gives you rough guidance that you then have to fine-tune. But there is one good thing about being discrete: you can enumerate all the possibilities, which is both accurate and efficient since there are fewer than a million of them.
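The inversion can be sketched without writing 𝑴⁻¹ out explicitly, by solving 𝑺𝑴 = 𝑹 with Gauss-Jordan elimination. This is only an illustration: `solve_setup` is a hypothetical helper, 𝑹 is taken as the target bias minus the "Left" row, blank coefficients are assumed to be 0, and Toe-Out is assumed to affect Braking Stability and Cornering.

```python
LEFT = [0.5, 0.45, 0.2, 0.25, 1.0]
# Rows: Front Wing, Rear Wing, Anti-Roll, Tyre Camber, Toe-Out (blank cells = 0).
COEFFS = [
    [0.4, -0.2, 0.3, -0.15, -0.1],
    [-0.4, 0.2, 0.25, 0.25, -0.9],
    [-0.1, 0.15, -0.15, 0.5, 0.0],
    [0.1, -0.25, 0.25, -0.1, 0.0],
    [0.0, 0.2, -0.05, 0.0, 0.0],
]


def solve_setup(target):
    """Solve S * M = target - LEFT for the (continuous) setup S."""
    n = 5
    # Equation j reads: sum_i S[i] * M[i][j] = target[j] - LEFT[j].
    rows = [
        [COEFFS[i][j] for i in range(n)] + [target[j] - LEFT[j]]
        for j in range(n)
    ]
    for col in range(n):
        # Partial pivoting: use the row with the largest entry in this column.
        pivot = max(range(col, n), key=lambda r: abs(rows[r][col]))
        rows[col], rows[pivot] = rows[pivot], rows[col]
        p = rows[col][col]
        rows[col] = [v / p for v in rows[col]]
        for r in range(n):
            if r != col:
                f = rows[r][col]
                rows[r] = [a - f * b for a, b in zip(rows[r], rows[col])]
    return [rows[i][n] for i in range(n)]


setup = solve_setup([0.5, 0.5, 0.5, 0.5, 0.5])  # recovers the middle setup
```

The result is a continuous value per slider, so it still has to be snapped to the nearest in-game step and fine-tuned by hand.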

Conclusion

So what?

We already have some constraints from the feedback, and we know how the setups work. What remains is to find the setups, among all 952560 possibilities, that match these constraints. Pick one of them and run again: the candidates are quickly reduced to the perfect one within 4-5 attempts, which is overwhelmingly effective. Additionally, you can do some manual runs first and enter that setup as the starting point, which can lead to even better results.
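The filtering idea can be sketched as a brute-force search: treat every grid setup as a possible perfect setup and keep only those that would have produced the feedback seen so far. This is an illustration, not the website's actual code. The grid of 5 positions per slider (`STEPS`) is hypothetical and much coarser than the game's, Toe-Out is assumed to affect Braking Stability and Cornering, and `candidates` and `hidden` are made-up names.

```python
from itertools import product

LEFT = [0.5, 0.45, 0.2, 0.25, 1.0]
# Rows: Front Wing, Rear Wing, Anti-Roll, Tyre Camber, Toe-Out (blank cells = 0).
COEFFS = [
    [0.4, -0.2, 0.3, -0.15, -0.1],
    [-0.4, 0.2, 0.25, 0.25, -0.9],
    [-0.1, 0.15, -0.15, 0.5, 0.0],
    [0.1, -0.25, 0.25, -0.1, 0.0],
    [0.0, 0.2, -0.05, 0.0, 0.0],
]
STEPS = 5  # hypothetical positions per slider, not the game's real step counts


def bias(setup):
    return [LEFT[j] + sum(setup[i] * COEFFS[i][j] for i in range(5))
            for j in range(5)]


def feedback(d):
    d = abs(d)
    if d <= 0.007:
        return "optimal"
    if d <= 0.04:
        return "great"
    return "good" if d <= 0.1 else "bad"


def candidates(history, steps=STEPS):
    """Yield each grid setup that, if perfect, explains all feedback so far.

    history is a list of (setup, labels) pairs with one label per bias metric.
    """
    grid = [k / (steps - 1) for k in range(steps)]
    for cand in product(grid, repeat=5):
        perfect = bias(cand)
        if all(
            feedback(b - p) == label
            for setup, labels in history
            for b, p, label in zip(bias(setup), perfect, labels)
        ):
            yield cand


# Demo: simulate one practice run at the middle setup against a hidden target.
hidden = (0.25, 0.75, 0.5, 0.5, 1.0)
first_run = [0.5] * 5
labels = [feedback(b - p) for b, p in zip(bias(first_run), bias(hidden))]
remaining = list(candidates([(first_run, labels)]))
```

Each additional run appends another `(setup, labels)` pair to the history, which shrinks `remaining` further.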

Happy managing!